
Last month, RocketMill was invited to Google Marketing Live 2023 EMEA – Google’s annual event showcasing the latest ads product innovations. Across two days, we were hosted alongside other agencies and brands at Google’s Dublin HQ, to hear from keynote speakers and connect face-to-face with product teams and agency contacts.

AI was, of course, top of the agenda, with the content broken down into two strands:

Traditional AI: Referring to the conventional approach of programming specific rules and instructions for AI systems to follow, Traditional AI is reliant on explicit instructions and predefined conditions to make decisions and perform tasks.

Generative AI: On the other hand, Generative AI involves training models which learn from data and generate new content. It focuses on patterns and structures within the data to create realistic outputs without explicit programming.

Throughout the event, we heard how Google is bringing both into its Ads platforms. One thing that resonated was that Google sees AI as the next big shift since mobile. Those who have been in the industry a while will remember the “year(s) of mobile” starting around 2010. Advertisers faced challenges as users’ behaviour fundamentally shifted to searching from mobile, and they had to pivot quickly away from desktop-focused campaigns and websites.

Traditional AI – Building on what we know

Whilst “machine learning” was previously front and centre of many Google Ads products, a change in language appears to have taken place: the term has been uniformly retired in favour of the umbrella “AI” or “Powered by AI”. The key theme here was how AI (or machine learning) has already been at the heart of Google products over the past years – from smart bidding to Performance Max and broad match logic.

We heard more about the behind-the-scenes improvements to these tools, which can be hard to appreciate as they are not immediately apparent. We’ve become accustomed to tools like smart bidding, but the systems behind them have been constantly evolving since launch. This speaks to the culture of testing across accounts – nothing stands still, and whilst something may not have worked two or three years ago, changes in either the products themselves or the options open to advertisers mean the ground may have shifted.

Another topic that came up was the importance of providing as many data signals as possible. Through providing a signal on exactly what is valuable to a business in the form of revenue (or a proxy value), tools such as smart bidding are better able to optimise towards the outcome we want. This can often be a challenge for lead gen clients (versus more traditional ecommerce, where revenue values are readily available).
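As a rough illustration of what a proxy value might look like for a lead gen account, the sketch below estimates the expected revenue of a lead as close rate multiplied by average deal value. This is only one common way to derive a proxy and was not something Google presented; the stage names, close rates and deal values are all made-up assumptions, and nothing here calls the Google Ads API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeadStage:
    """A funnel stage with illustrative (hypothetical) numbers."""
    name: str
    close_rate: float      # share of leads at this stage that become customers
    avg_deal_value: float  # average revenue per closed deal


def proxy_value(stage: LeadStage) -> float:
    """Expected revenue of one lead at this stage: close rate x deal value."""
    if not 0.0 <= stage.close_rate <= 1.0:
        raise ValueError("close_rate must be between 0 and 1")
    return round(stage.close_rate * stage.avg_deal_value, 2)


# Hypothetical funnel: the figures are assumptions, not benchmarks.
stages = [
    LeadStage("form_submitted", close_rate=0.05, avg_deal_value=2000.0),
    LeadStage("sales_qualified", close_rate=0.25, avg_deal_value=2000.0),
]

# Map each stage to the value that could be passed back as a conversion value.
proxy_values = {stage.name: proxy_value(stage) for stage in stages}
print(proxy_values)
```

Values like these could then be sent back with each conversion, so that a qualified lead is treated as worth more than a raw form fill.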

The core takeaway here was how this more traditional AI will continue to develop and improve, with Google keen to understand the challenges advertisers are facing and how best their products can address them.

Generative AI – A new way of working

This was the most exciting part of the event, not only sharing more news on the Search Generative Experience (SGE) on the Search Engine Results Page (SERP), but also showing how generative AI will start to be baked into the Google Ads platform:

Search Generative Experience (SGE)

Whilst this was announced the week prior, we got to see more on how users can interact with this new way of searching for information.

This could be the start of a huge shift across both organic and paid search, and ultimately the way users find information via Google. Whilst Microsoft was first to market with AI search, building OpenAI’s ChatGPT technology into Bing, Google has the market share that could accelerate this new way of searching.

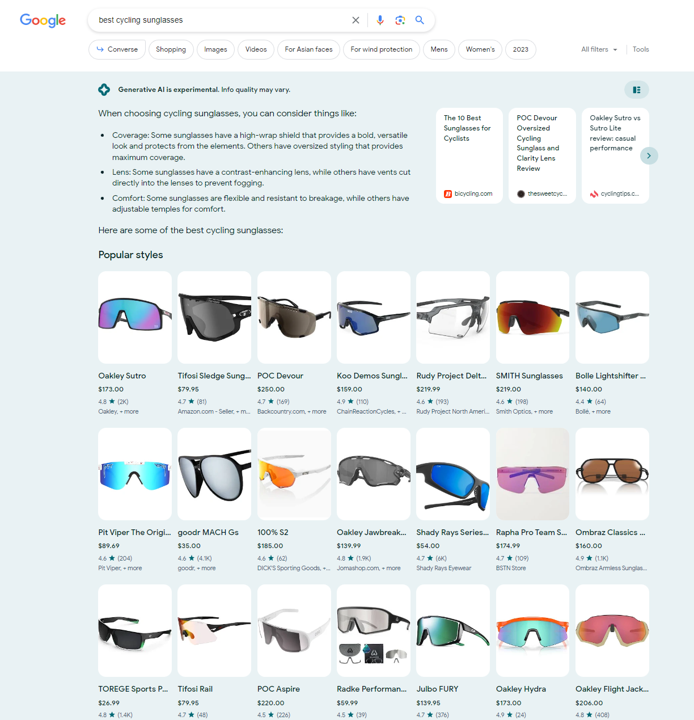

Exploring this new Search experience with Search Labs, I can see a large number of images being returned for ecommerce queries, and a very different look to the SERP. Whilst these are all organic shopping listings for now, this represents a clear opportunity for Google to monetise.

It’s clear that ads will form part of the product, but the immediate focus will be on how useful people are finding SGE and making sure it gains traction versus its competitors, rather than a rush to monetise (perhaps similar to YouTube Shorts, which first launched in 2020 to compete in the short-form video space with TikTok and Instagram, but was only properly monetised in February 2023).

SGE gives users the ability to ask follow-up questions, so making the most of this new paradigm will require tools like Responsive Search Ads (RSAs). Delivery of an ad customised for the context will be key.

Conversational campaign creation

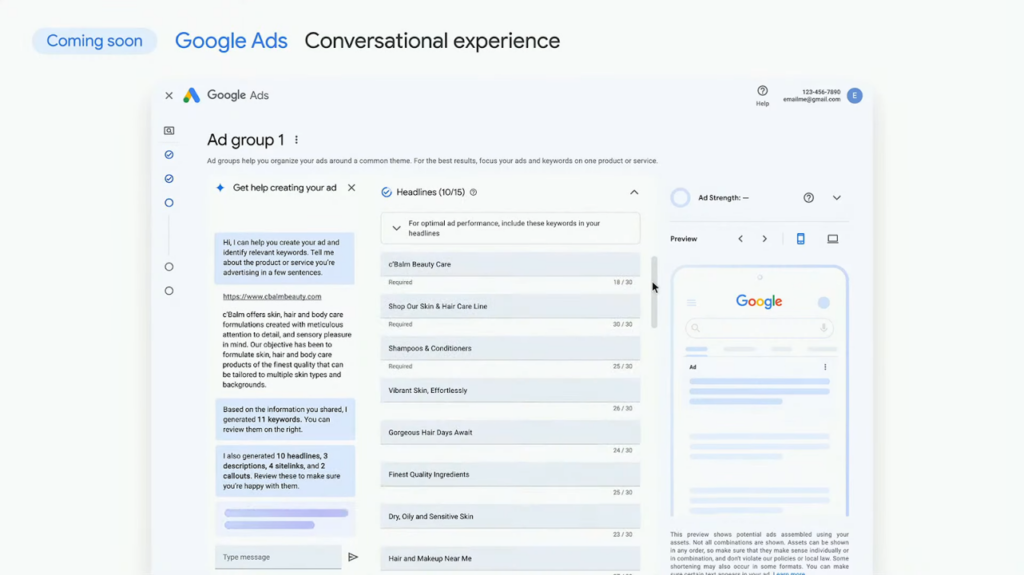

Outside of SGE, the highlight of the keynote was how we will be able to utilise generative AI during the campaign creation process:

This live demo for a skincare brand showed generative AI suggesting both keywords and ads based on site content, and the ability to input prompts to improve the output (in this case, calling out more specific USPs).

This is not entirely new – for a long time we have been able to drop a URL into the keyword planner and get a list of suggested keywords back, or have individual RSA assets suggested to us – but having this all in one place is new, as is the ability to go back and forth with AI, iterating on and improving the output.

Prompts will be key here in getting ideas back that will resonate with the target audience, and in avoiding stale or rote suggestions.

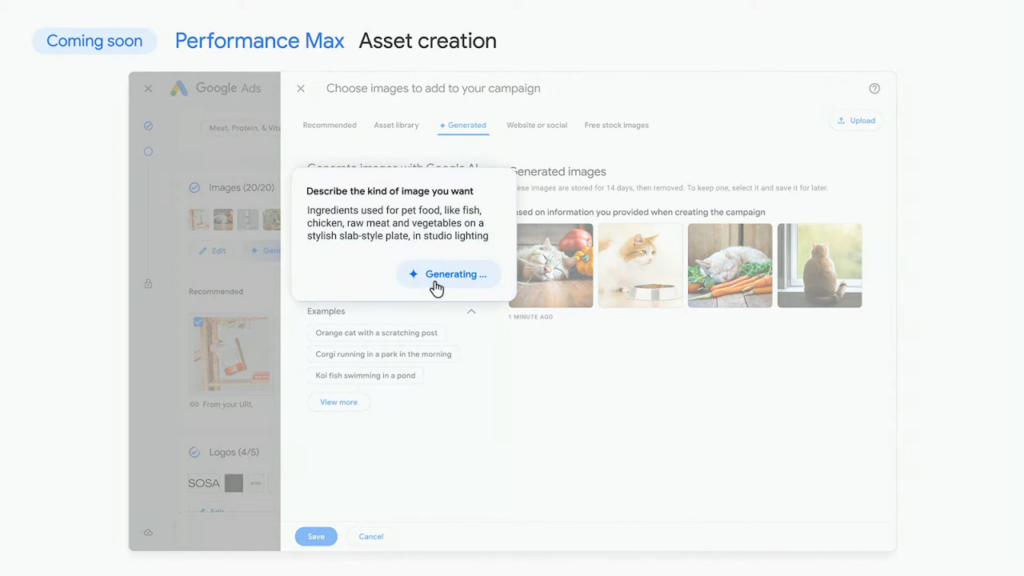

Performance Max asset generation

This was another impressive demo, embedding AI image generation (such as Stable Diffusion, which I looked at previously) directly into the UI.

It was interesting to see that the prompt being used was fairly short, but with the addition of “in studio lighting”. From exploring how Stable Diffusion could be used to create ads, getting the prompts right is (again) key to getting the desired output. It’s not known whether this model has been trained on a general data set or specifically to output visually appealing ad assets.

One immediate question this does raise is how happy brands will be to use AI-generated imagery in their campaigns. It’s likely many will reject the idea, so prior proof of the tool’s capability will be essential in gaining brands’ trust.

Whilst everyone will have access to this, it will be those who can quickly adapt and understand the right types of prompts that will see success, rather than simply asking the AI once and accepting the output. There was an interesting link to another announcement by Google, that they will be tagging images that have been AI generated – with no current indication of whether this will extend to these assets.

Generating multilingual voiceovers

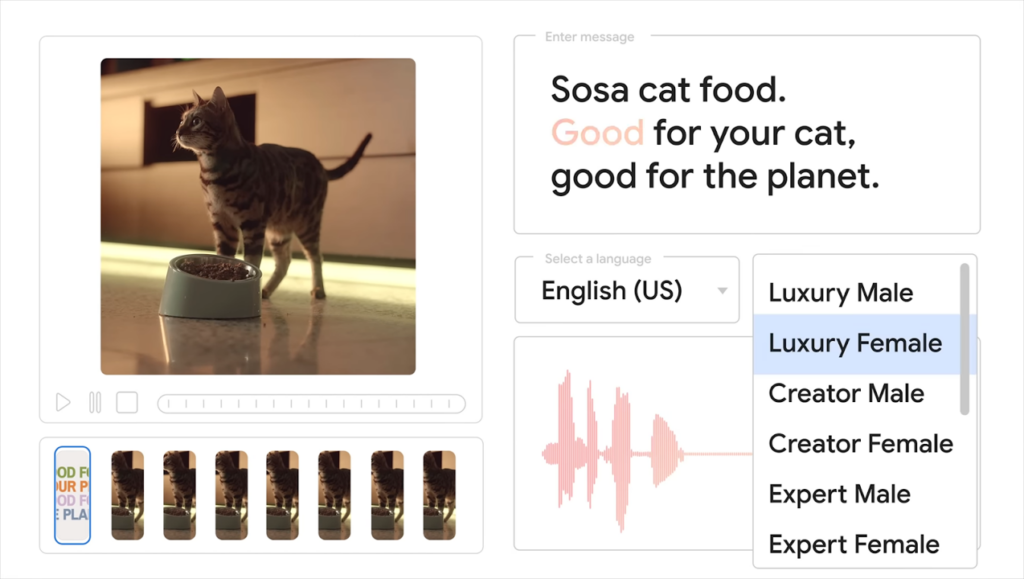

This was briefly touched upon during the sizzle reel released just after the keynote, showing many of the features above as well as speech synthesis for use in media creative:

In this very quick clip, we saw voices being generated in multiple languages and styles, to be overlaid on a video ad.

Whilst only a small snippet, this sounded quite far behind tech such as ElevenLabs. The fact this wasn’t expanded upon in the keynote itself suggests it’s in earlier development than some of the other tools.

The worry here would be that, even if the generated voice is high quality, the limited options will be a sticking point – once a brand reaches a certain size, it seems unlikely they’ll want to use a “default” voiceover that other smaller brands may also be using.

Human-centric marketing

To wrap up, the biggest takeaway from the event was that, whilst we have access to the most advanced tools we have ever seen in the Google Ads platform, nothing has changed in our core goal – to connect with potential customers.

Human creativity has always sat at the heart of advertising – AI-powered products help give us the headspace to truly consider who our customers are, what they are looking for and how we can help them.

Stay tuned for more – or contact us with any questions.