SEO

Since ChatGPT was released at the end of last year, marketing professionals across channels have had a keen eye on how chatbot AI functionality could improve and streamline workstreams.

With the ability to seemingly provide answers to complex questions almost instantly, it’s no surprise that the release of this technology has unsettled the companies behind search engines. In the last few weeks, we have seen them accelerate their plans for integrating chat AI functionality into their search platforms. Microsoft, which has invested heavily in OpenAI, the developer of ChatGPT, was first to act, bringing its planned integration with the platform forward from March to earlier this week, the 8th of February. The new, AI-powered version of Microsoft’s Bing search engine is not yet available to all and can only be accessed by a select list of logged-in testers.

With Google normally the front-runner in this space, how has the search giant responded? We take a look at everything we know so far about Google’s progress in innovating search through AI.

So, what’s the latest with Google?

Never far behind, Google announced Bard, its equivalent chatbot implementation of AI, based on its LaMDA language model, in an earnings call on the 2nd of February. In a short-notice presentation on the 9th of February, Prabhakar Raghavan, a Senior VP at Google, discussed how the chatbot functionality would be released to trusted testers on a light version of LaMDA within the week.

How will Bard inform search results?

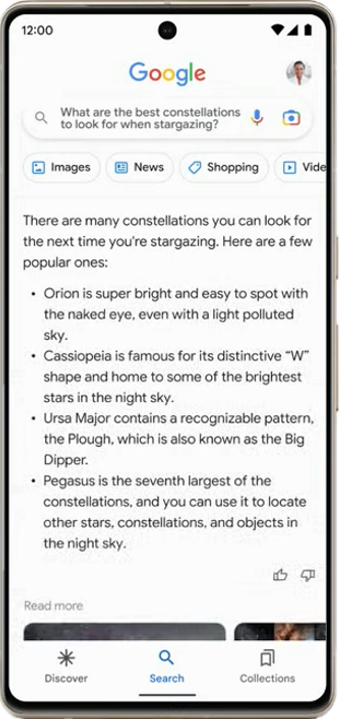

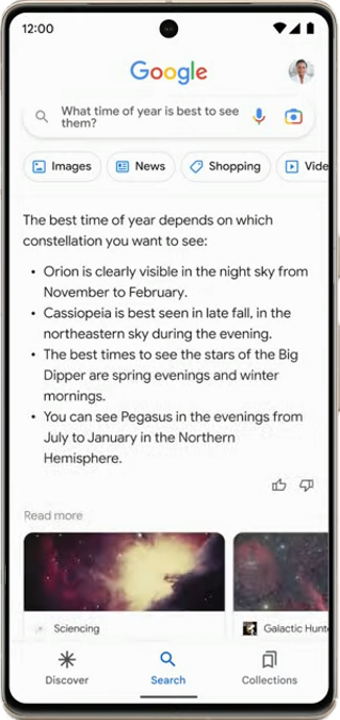

Bard will be integrated into search results to surface answers to complex queries that Google has termed “NORA”, or No One Right Answer. In the presentation, Google demonstrated this with queries such as “What are the best constellations to look for when stargazing?”

In this example, Google showcased an answer that may have previously been fulfilled with a featured snippet. The existing featured snippet answer would offer a link to the website from which it was pulled (for the purposes of brand awareness and an ongoing customer journey). However, with Bard, this has been replaced with a chatbot-generated answer that occupies the majority of the mobile screen.

As the answer to the query was generated, normal search results did appear to load underneath Bard’s answer, but were pushed down the page. These traditional search results were eventually replaced with a carousel of image and text links to related content. Under this was a set of prompts similar to “People Also Ask”. When clicked, each prompt took the user to another AI-generated result (as shown in the second screenshot).

The results have feedback options for reinforcement training (thumbs up or down). They do, however, lack a citation for the websites from which the content is pulled—if, in fact, it is pulled from the top results. The timeline for this being deployed in search results was defined as “soon”.

Google made a point of using the term “AI” frequently, a shift away from its previous, more technical language.

The presentation reinforced that AI has been embedded in Google products for several years and that Google invented and shared the original Transformer technology that underpins subsequent models. This was a pointed reminder that AI is not new to Google, despite ChatGPT gaining recent headlines.

Searching with images

A key differentiating factor between Microsoft and Google is Google’s ability to use images to conduct searches. Alongside upcoming enhancements to augmented reality, maps, arts and culture, and translation capabilities, Google’s image recognition technology, Lens, was given the spotlight. With the ability to take a photo and use it as a seed for a search, Multisearch uses AI to understand a query and formulate an answer. For example, when searching for a clothing item or furniture in a similar style or different colour, the platform uses your uploaded image as a reference point to find a similar item.

This functionality is being expanded so that “near me” can be added to an image query, helping to find versions of that product in the searcher’s vicinity. The demonstration used a photo of a French pastry which, when searched, returned local results for bakeries.

How will this impact the future of Search?

Following Google’s announcement, and a subsequent Twitter ad for Bard that was shown to display inaccurate information, the market reacted with apparent disapproval, wiping around $100 billion off the market value of Google’s parent company, Alphabet. But with less than a week having passed since the news, what does this really mean for the tech giant and users of its platform?

- If Bard is integrated as implied, we will likely see the click-through rate for informational queries decline significantly. Searchers would receive a more thorough, tailored response to their query without visiting a website, in part because Google is obscuring the onward journey to brand and publisher websites. Despite this, Raghavan stated that Google prioritises sending high-quality traffic to creators and influencers, emphasising that it has increased the level of traffic sent to websites every year, though it is not clear how this will be connected to Bard.

- The demonstration lacked ads, so there may also be a reduction in ad inventory. However, as ads are Google’s biggest revenue stream, it is unlikely to launch a feature that reduces its ability to generate ad revenue.

- Microsoft/Bing have already deployed their solution, albeit in a restricted capacity. If this proves to be a better experience for searchers and has sufficient time to demonstrate value before Google’s Bard release, then we could see a shift in the market from Google to Bing. Optimisation for Bing would become a higher priority and achieve a better ROI than it currently does. Bing’s version is also closer to traditional search results, with a sidebar for AI-generated responses, so it would likely have less impact on click-through rate.

- Multisearch is increasing in usage and Google expects that to continue, saying “the camera is the new keyboard”. Understanding the value of being visible in image searches will be increasingly important.

- Google has faced legal challenges over the use of publisher content in its search results through featured snippets. If a publisher can prove that its content is being pulled into the AI response without suitable attribution, it’s likely these legal implications will intensify.
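
To put a number on any decline in click-through rate for informational queries, one option is to compare CTR (clicks divided by impressions) for the same query before and after a change in the results page. The sketch below is a minimal Python illustration only: the figures are invented, and the `QueryStats`, `ctr` and `ctr_change` names are hypothetical helpers rather than part of any Google tool.

```python
from dataclasses import dataclass


@dataclass
class QueryStats:
    """Clicks and impressions for one query over one period (illustrative data)."""
    query: str
    clicks: int
    impressions: int


def ctr(stats: QueryStats) -> float:
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    if stats.impressions == 0:
        return 0.0
    return stats.clicks / stats.impressions


def ctr_change(before: QueryStats, after: QueryStats) -> float:
    """Absolute change in CTR (after minus before), in percentage points."""
    return (ctr(after) - ctr(before)) * 100


# Hypothetical numbers for a single informational query.
before = QueryStats("best constellations for stargazing", clicks=120, impressions=2000)
after = QueryStats("best constellations for stargazing", clicks=60, impressions=2000)

print(f"CTR before: {ctr(before):.1%}")
print(f"CTR after:  {ctr(after):.1%}")
print(f"Change: {ctr_change(before, after):+.1f} percentage points")
```

Reporting the change in percentage points, rather than as a relative percentage, avoids overstating movements on queries that had a low CTR to begin with.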

What actions can I take now?

For now, there aren’t any immediate changes to Google’s organic search results that will impact the majority of your search sessions. However, here are some actions to take now and in the near future to stay ahead:

- Look at trends around image search in Google Search Console to identify increased impressions or clicks from Lens.

- Review and optimise images to ensure they’re as eligible as possible for multisearch results.

- Review and optimise your Google Business Profile properties to ensure they’re as eligible as possible for augmented maps functionality.

- Monitor the split of traffic and revenue from Google and Bing when the new technologies deploy to see a) whether consumers are changing preferred search engines and b) what downstream impact that has on your performance.

- Give feedback on AI-generated results when you see them, using the ‘thumbs up/down’ option next to the result. This will help to train the models, and has also been encouraged by Google’s John Mueller in response to the outrage from SEO professionals that the results aren’t cited.

- Look for updates! This is an emerging technology and is in testing. Iterations are expected to be deployed quickly.
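
As a rough illustration of monitoring the Google/Bing split mentioned above, the following Python sketch calculates each search engine’s share of organic sessions per month. It assumes you can export monthly sessions by search engine from your analytics tool; the rows below are made up, and `engine_share` is a hypothetical helper rather than a function from any analytics product.

```python
from collections import defaultdict

# Hypothetical monthly organic sessions, e.g. exported from an analytics tool.
# Each row: (month, search engine, sessions). The figures are invented.
ROWS = [
    ("2023-01", "google", 9200),
    ("2023-01", "bing", 800),
    ("2023-02", "google", 9000),
    ("2023-02", "bing", 1000),
]


def engine_share(rows):
    """Return {month: {engine: share of that month's sessions}}."""
    totals = defaultdict(int)
    by_engine = defaultdict(dict)
    for month, engine, sessions in rows:
        totals[month] += sessions
        by_engine[month][engine] = by_engine[month].get(engine, 0) + sessions
    return {
        month: {
            # Guard against months with no recorded sessions at all.
            engine: (count / totals[month] if totals[month] else 0.0)
            for engine, count in engines.items()
        }
        for month, engines in by_engine.items()
    }


shares = engine_share(ROWS)
for month in sorted(shares):
    summary = ", ".join(f"{e}: {s:.1%}" for e, s in sorted(shares[month].items()))
    print(f"{month} -> {summary}")
```

The same calculation can be run on revenue instead of sessions to see whether any shift in preferred search engine carries through to downstream performance.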

Google has remained largely unchallenged by its peers in recent years, but Microsoft’s latest release requires Google to enhance its offering at pace to keep up. With a firm focus placed on “responsibility” throughout the presentation, and an indication that the extended time to market was in the interest of responsible deployment, only time will tell whether the extra time taken will result in a stronger offering.

We will be closely watching for updates, but please get in touch in the meantime if you need any support with your SEO strategy.