Last month Google announced the beta release of the Google Cloud Vision API. It allows anyone to submit images and use a range of features that report information about the content of those images.

The following API features can be applied to an image in any combination:

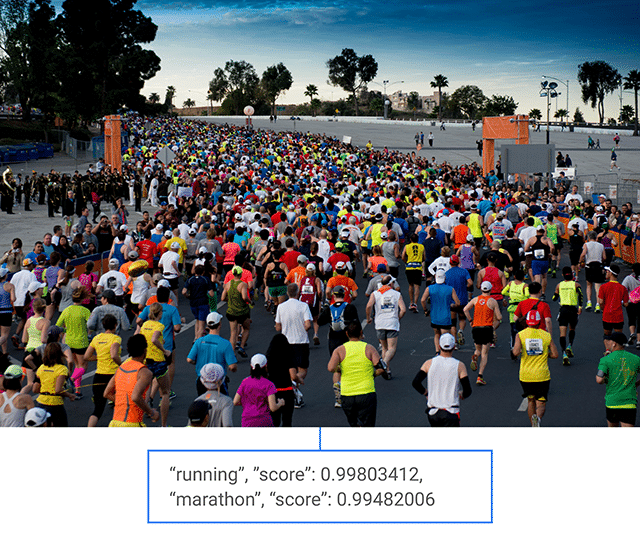

Label/Entity Detection – This identifies the main entities within an image. If you send the API a picture of a banana, it will return the label "banana" with a high confidence score. You can use this feature to build up metadata on your images, which could be useful for image-based search or recommendations.

Landmark Detection – The API can identify popular natural and man-made landmarks, providing the latitude and longitude of their location.

Logo Detection – The API can also recognise popular product logos and name the brand they belong to.

Image Attribute Detection – The API can report general attributes of the image, such as its dominant colours.

Safe Search Detection – Powered by Google SafeSearch, this can detect different types of inappropriate content within an image. This feature enables developers and website managers to easily moderate uploaded images.

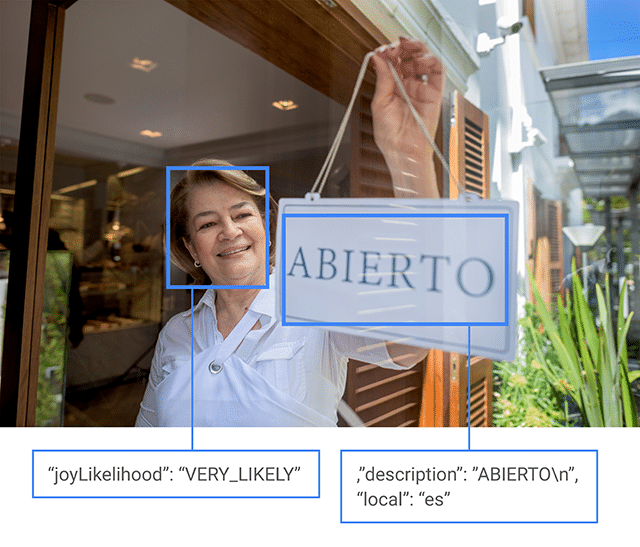

Optical Character Recognition (OCR) – This finds text within an image and identifies the language it is written in. Besides extracting the text itself, this will be useful for building your image catalogue’s metadata.

Facial Detection – The API can detect faces in a photo and even estimate the emotion each one expresses, reporting simple responses such as joy or sorrow.
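
For developers, each of these features corresponds to a named feature type in the API's v1 REST reference, and a single request can ask for any combination of them. The Python sketch below builds such a request body. The short feature names on the left and the `build_annotate_request` helper are our own hypothetical choices; the type strings and JSON layout follow the public v1 documentation, which is worth re-checking as the API evolves.

```python
import base64

# Our own short names (hypothetical) mapped to the v1 feature type strings.
FEATURE_TYPES = {
    "labels": "LABEL_DETECTION",
    "landmarks": "LANDMARK_DETECTION",
    "logos": "LOGO_DETECTION",
    "image_properties": "IMAGE_PROPERTIES",
    "safe_search": "SAFE_SEARCH_DETECTION",
    "text": "TEXT_DETECTION",
    "faces": "FACE_DETECTION",
}


def build_annotate_request(image_bytes, features, max_results=10):
    """Build an images:annotate JSON body asking for several features at once."""
    unknown = [f for f in features if f not in FEATURE_TYPES]
    if unknown:
        raise ValueError(f"unknown feature(s): {unknown}")
    return {
        "requests": [
            {
                # Images sent inline are base64-encoded into the JSON body.
                "image": {"content": base64.b64encode(image_bytes).decode("ascii")},
                # One entry per requested feature; any combination is allowed.
                "features": [
                    {"type": FEATURE_TYPES[f], "maxResults": max_results}
                    for f in features
                ],
            }
        ]
    }
```

For example, passing the bytes of a photo with `["labels", "safe_search"]` asks for labels and a SafeSearch check in one call.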

How do you get to use it?

Developers can analyse the content of an image from within their own applications through a RESTful interface that uses JSON for both requests and responses, which makes integrations easier to write, operate and maintain.
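
As a rough illustration of that REST and JSON flow, here is a minimal Python sketch using only the standard library. It assumes the v1 `images:annotate` endpoint with an API key passed as the `key` query parameter; the helper names are hypothetical, and in production you may prefer Google's official client libraries.

```python
import json
import urllib.parse
import urllib.request

# Assumed v1 REST endpoint (check the current API reference before relying on it).
ENDPOINT = "https://vision.googleapis.com/v1/images:annotate"


def make_http_request(body, api_key):
    """Wrap a JSON-serialisable request body in a POST request (not sent yet)."""
    query = urllib.parse.urlencode({"key": api_key})
    return urllib.request.Request(
        f"{ENDPOINT}?{query}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def annotate(body, api_key, timeout=30):
    """Send the request and return the decoded JSON response as a dict."""
    with urllib.request.urlopen(make_http_request(body, api_key), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

A body such as `{"requests": [{"image": {"content": "<base64 image>"}, "features": [{"type": "LABEL_DETECTION"}]}]}` can be passed to `annotate(body, "YOUR_API_KEY")`; the reply contains a `responses` list with one entry per image.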

Newcomers can start with the guide at https://cloud.google.com/vision/docs/getting-started, which explains what is needed and includes a few examples so they can get to work quickly.

The service is priced per feature per month, and the first 1,000 units of each feature are free. Beyond that, prices range from $2.50 to $5.00 per 1,000 units and fall as usage increases, a pricing model many already find attractive.
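
To get a feel for the arithmetic, the sketch below estimates a month's bill. It assumes one unit per feature per image, the free 1,000 units per feature described above, and a single flat price per 1,000 units for each feature. Real pricing is tiered and drops with volume, so the rates you pass in are hypothetical inputs rather than quoted prices.

```python
# First 1,000 units of each feature are free each month, as described above.
FREE_UNITS_PER_FEATURE = 1000


def monthly_cost(images, price_per_1000_by_feature):
    """Estimate a monthly bill when every image is sent to each listed feature.

    One image analysed by one feature counts as one unit. Prices are
    hypothetical flat rates in dollars per 1,000 units; real pricing is tiered.
    """
    total = 0.0
    for price_per_1000 in price_per_1000_by_feature.values():
        billable_units = max(0, images - FREE_UNITS_PER_FEATURE)
        total += billable_units / 1000 * price_per_1000
    return round(total, 2)
```

For instance, 50,000 images checked for labels at a hypothetical $5.00 and SafeSearch at $2.50 per 1,000 units leaves 49,000 billable units per feature, so `monthly_cost(50_000, {"labels": 5.00, "safe_search": 2.50})` gives $245.00 + $122.50 = $367.50.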

What can marketers use it for?

Powered by the same technology as Google Images, the API is accurate and fast. It is relatively easy to operate and integrate.

In short, many of Google Cloud Vision’s features extract information and insights that will be useful for websites handling images at scale.
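
As an example of pulling that information out, this sketch turns one entry of an API response into simple search metadata: confident labels, any logos, landmark coordinates and the dominant colour. The field names follow the v1 response format; the `min_score` cut-off, the `image_metadata` name and the output shape are hypothetical choices.

```python
def image_metadata(response, min_score=0.7):
    """Collect searchable metadata from one entry of the `responses` list.

    min_score is a hypothetical confidence cut-off for labels.
    """
    labels = [
        a["description"]
        for a in response.get("labelAnnotations", [])
        if a.get("score", 0) >= min_score
    ]
    logos = [a["description"] for a in response.get("logoAnnotations", [])]

    # Each landmark may carry one or more latitude/longitude locations.
    landmarks = []
    for a in response.get("landmarkAnnotations", []):
        for loc in a.get("locations", []):
            lat_lng = loc.get("latLng", {})
            landmarks.append(
                (a["description"], lat_lng.get("latitude"), lat_lng.get("longitude"))
            )

    # Pick the highest-scoring dominant colour and express it as a hex string.
    colours = (
        response.get("imagePropertiesAnnotation", {})
        .get("dominantColors", {})
        .get("colors", [])
    )
    dominant = None
    if colours:
        top = max(colours, key=lambda c: c.get("score", 0)).get("color", {})
        # Channels with a value of 0 may be omitted from the JSON, hence the default.
        dominant = "#{:02x}{:02x}{:02x}".format(
            *(int(top.get(channel, 0)) for channel in ("red", "green", "blue"))
        )

    return {
        "labels": labels,
        "logos": logos,
        "landmarks": landmarks,
        "dominant_colour": dominant,
    }
```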

One application likely to interest marketers is the moderation of user-generated content. Allowing users to upload visual content is a scary leap of faith for marketers, but if Google’s Cloud Vision API scans each image for anything rude or offensive, you can create a process that removes such images before your audience sees them.
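
A moderation step could be as simple as the sketch below, which checks the `safeSearchAnnotation` returned for an image. The API reports a likelihood, from `VERY_UNLIKELY` to `VERY_LIKELY`, for categories such as `adult`, `violence` and `racy`; which categories to check and where to set the threshold are hypothetical policy choices you would tune for your own audience.

```python
# SafeSearch likelihoods, ranked from least to most likely.
LIKELIHOOD_RANK = {
    "UNKNOWN": 0,
    "VERY_UNLIKELY": 1,
    "UNLIKELY": 2,
    "POSSIBLE": 3,
    "LIKELY": 4,
    "VERY_LIKELY": 5,
}


def should_block(safe_search, categories=("adult", "violence", "racy"), threshold="LIKELY"):
    """Return True if any checked category is at or above the threshold.

    `safe_search` is the safeSearchAnnotation dict from one response entry.
    The default categories and threshold are hypothetical policy choices.
    UNKNOWN (or a missing value) ranks lowest, so it never blocks on its own;
    you might route such images to manual review instead.
    """
    limit = LIKELIHOOD_RANK[threshold]
    return any(
        LIKELIHOOD_RANK.get(safe_search.get(category, "UNKNOWN"), 0) >= limit
        for category in categories
    )
```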

OCR as a service is likely to prove popular with anyone who needs to extract text from images. It is very accurate and reliable, and even handles handwriting to a reasonable degree. At higher volumes, OCR costs as little as 60 cents per 1,000 images, a very attractive price for most websites.
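
Pulling the text out of an OCR result takes only a few lines. In the v1 response format, the first element of `textAnnotations` typically holds the full detected text and its `locale`, with later elements covering individual words; this sketch relies on that layout, and `extract_text` is a hypothetical helper name.

```python
def extract_text(response):
    """Return (full_text, locale) from one TEXT_DETECTION response entry.

    Assumes the first textAnnotations element holds the whole detected text;
    returns ("", None) when no text was found.
    """
    annotations = response.get("textAnnotations", [])
    if not annotations:
        return "", None
    first = annotations[0]
    return first.get("description", "").strip(), first.get("locale")
```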

Of particular note is how this level of machine intelligence can be adapted for other applications, particularly robots built on simple artificial intelligence. For example, Google’s Cloud Vision API demo video shows a Raspberry Pi robot that communicates with the API using only a few hundred lines of code. The robot can roam, report what it sees and even identify smiling faces.
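
We don't have the robot's own code, but its smiling-face check can be approximated with the emotion likelihoods that face detection returns. This sketch counts faces whose `joyLikelihood` is `LIKELY` or `VERY_LIKELY`; that threshold and the `count_smiling_faces` name are hypothetical choices.

```python
# joyLikelihood values treated as "smiling" (a hypothetical threshold).
SMILING = {"LIKELY", "VERY_LIKELY"}


def count_smiling_faces(response):
    """Count faces in one response entry whose joyLikelihood suggests a smile."""
    return sum(
        1
        for face in response.get("faceAnnotations", [])
        if face.get("joyLikelihood") in SMILING
    )
```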

What do you think about Google Cloud Vision? Will it make a difference to the way you use images in your work? Let us know via Twitter at @RocketMill.